New Feature

Introducing: Visual Intelligence


Aaron Cannon

AI-moderated research has always been a listening technology. It helps you listen to humans at scale, conducting hundreds of interviews at once, probing dynamically based on what participants said, and synthesizing findings automatically. It unlocked something qualitative research could never do before: scale. But it was just the beginning.

Because listening only gets you so far.

The hesitation before a click, the flash of confusion on someone's face, the way a person moves through a store. That context has always existed; it just lived outside the reach of any interview platform.

Until now.

Introducing Visual Intelligence: a new suite of capabilities that gives Outset's AI moderator eyes. It monitors screens, reads facial expressions, and analyzes real-world photos and live video in real time. It uses what it sees to follow up more dynamically.

And it surfaces behavioral, emotional, and physical signals automatically in your analysis so you can make more confident, context-aware business decisions.

This is the biggest thing we've ever shipped.

Why This Matters

The say-do gap isn't new. Researchers have known for decades that what people say in an interview and what they actually do, feel, or experience aren't always the same.

The hesitation before clicking. The micro-expression before someone says "yeah, I like it." The way a shopper moves through a store versus the route they describe afterward.

Bridging that gap has always required more researchers, more time, more tooling stitched together. Screen recordings reviewed manually. Video footage scrubbed through. Separate tools for separate signals.

Visual Intelligence changes the equation. The same AI that's conducting your interview is now also watching, interpreting, and reacting, in real time.

Three New Capabilities

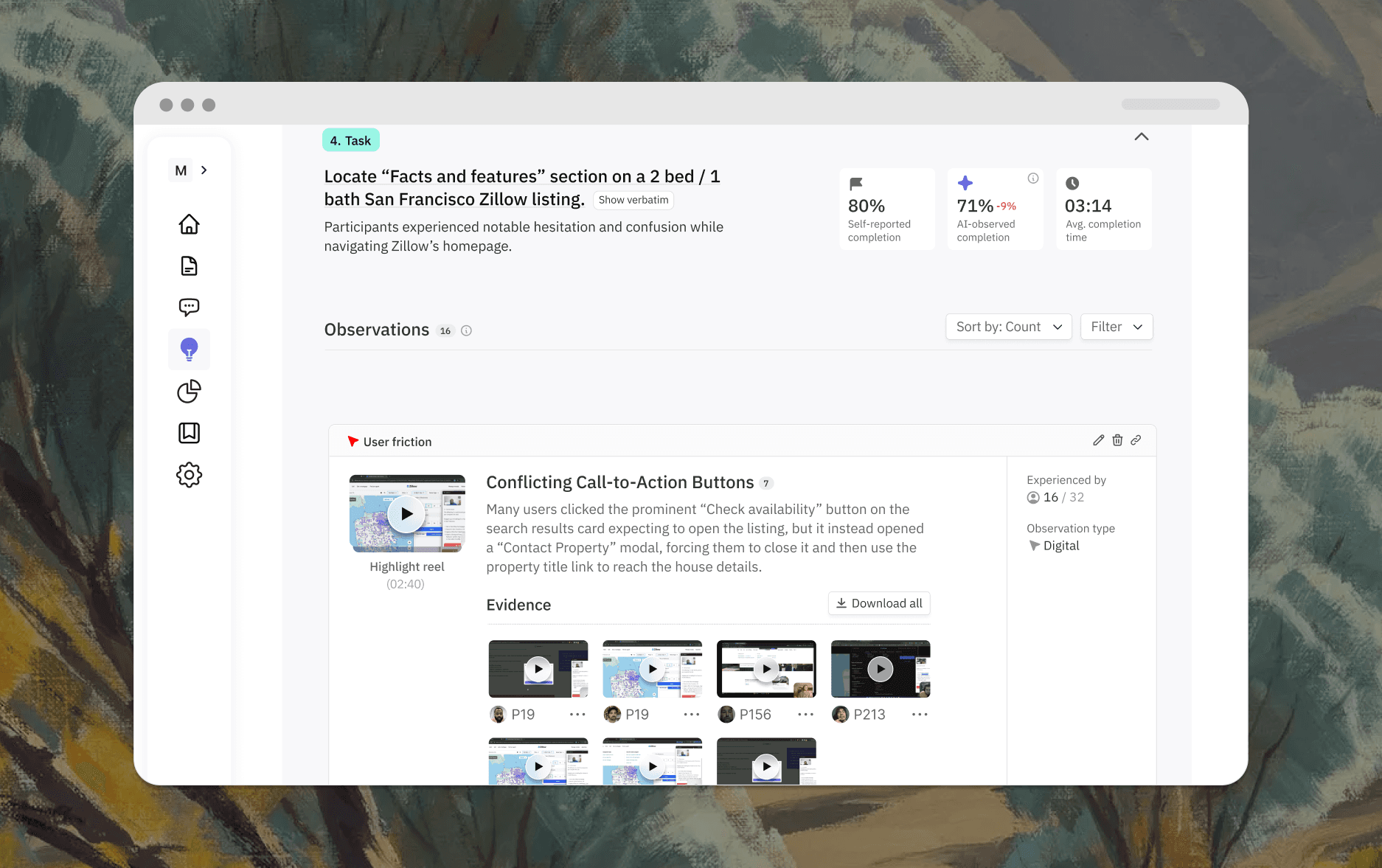

Digital Intelligence

During usability studies, Outset's AI silently monitors participant screens, tracking clicks, dead ends, backtracking, navigation paths, and hesitation as they unfold. It probes more precisely based on what it observes, and surfaces prioritized observations automatically in your insights. Outset handles the screen recording review you used to do manually.

It’s not just the future of unmoderated testing; it makes traditional unmoderated usability testing obsolete.

Good for:

Prototype and live product testing

Identifying friction users never verbalize

Comparing self-reported vs. observed task completion

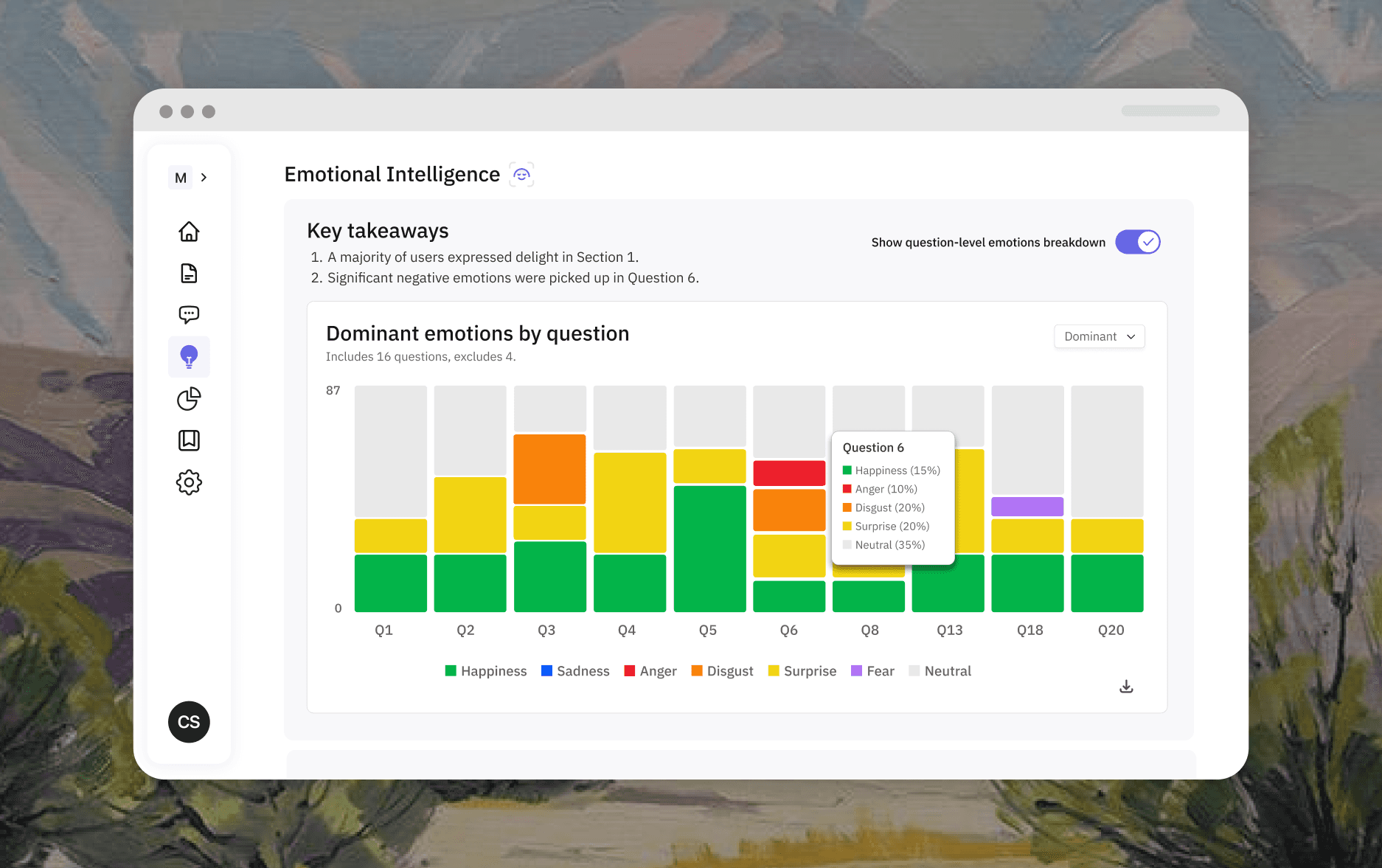

Emotional Intelligence

During video interviews, Outset detects facial expressions in real time, across the full emotional spectrum, and follows up on them. Signals are timestamped, annotated in transcripts, and surfaced in aggregate in insights. When a participant says they love something but their face tells a different story, you'll know.

Good for:

Concept testing

Creative and message testing

Any study where emotional resonance matters

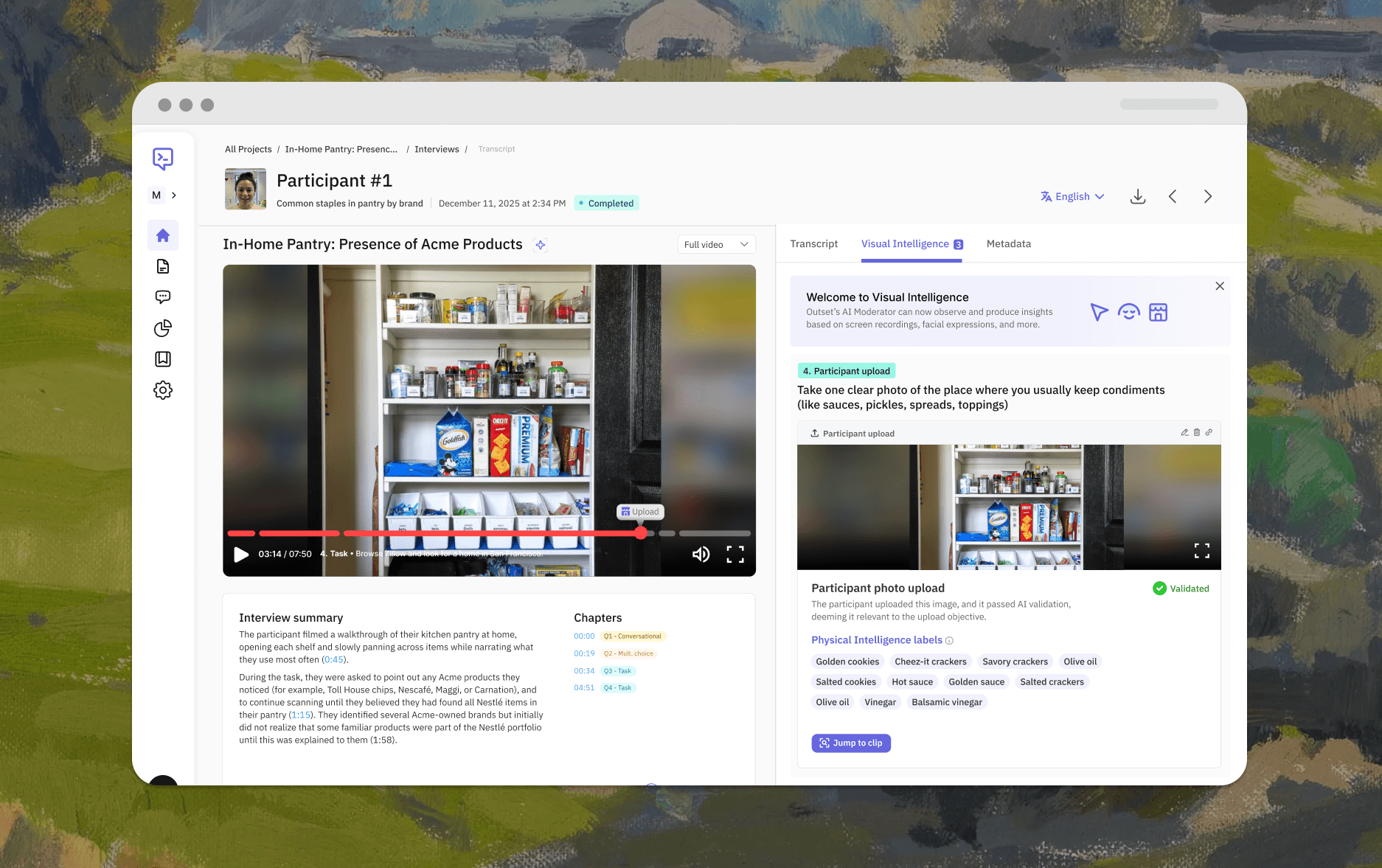

Physical Intelligence

Participants can now upload photos or stream live video of what they're seeing during the interview, directly inside the study. Outset's AI analyzes what it receives immediately and uses it as live context for dynamic follow-up, opening up research formats that simply weren't possible in an AI-moderated context before.

Good for:

In-home testing

Shopalongs and retail research

Packaging and unboxing studies

Out-of-home product experiences

This is the Next Era of AI-Moderated Research

First-generation AI moderation was a leap forward: hundreds of high-quality interviews at once, dynamic probing based on what participants said, automatic synthesis.

That was the listening era.

Visual Intelligence is what comes next.

Research is about understanding the full human experience. Now you have a platform built to capture it.

Interested in learning more? Book a personalized demo today!


About the author

Aaron Cannon

CEO - Outset

Aaron is the co-founder and CEO of Outset, where he’s leading the development of the world’s first agent-led research platform powered by AI-moderated interviews. He brings over a decade of experience in product strategy and leadership from roles at Tesla, Triplebyte, and Deloitte, with a passion for building tools that bridge design, business, and user research. Aaron studied economics and entrepreneurial leadership at Tufts University and continues to mentor young innovators.