UX Evals

Evaluate AI through real user experience with Outset UX Evals

UX Evals take traditional usability testing into the world of tokens and non-deterministic outcomes, modernizing UX evaluation for real AI-driven user experiences.

Conceptualized by Microsoft's Copilot team, powered by Outset.

Leading research teams are using Outset to rethink how AI should be evaluated

Use UX Evals to understand AI experiences — not just outputs

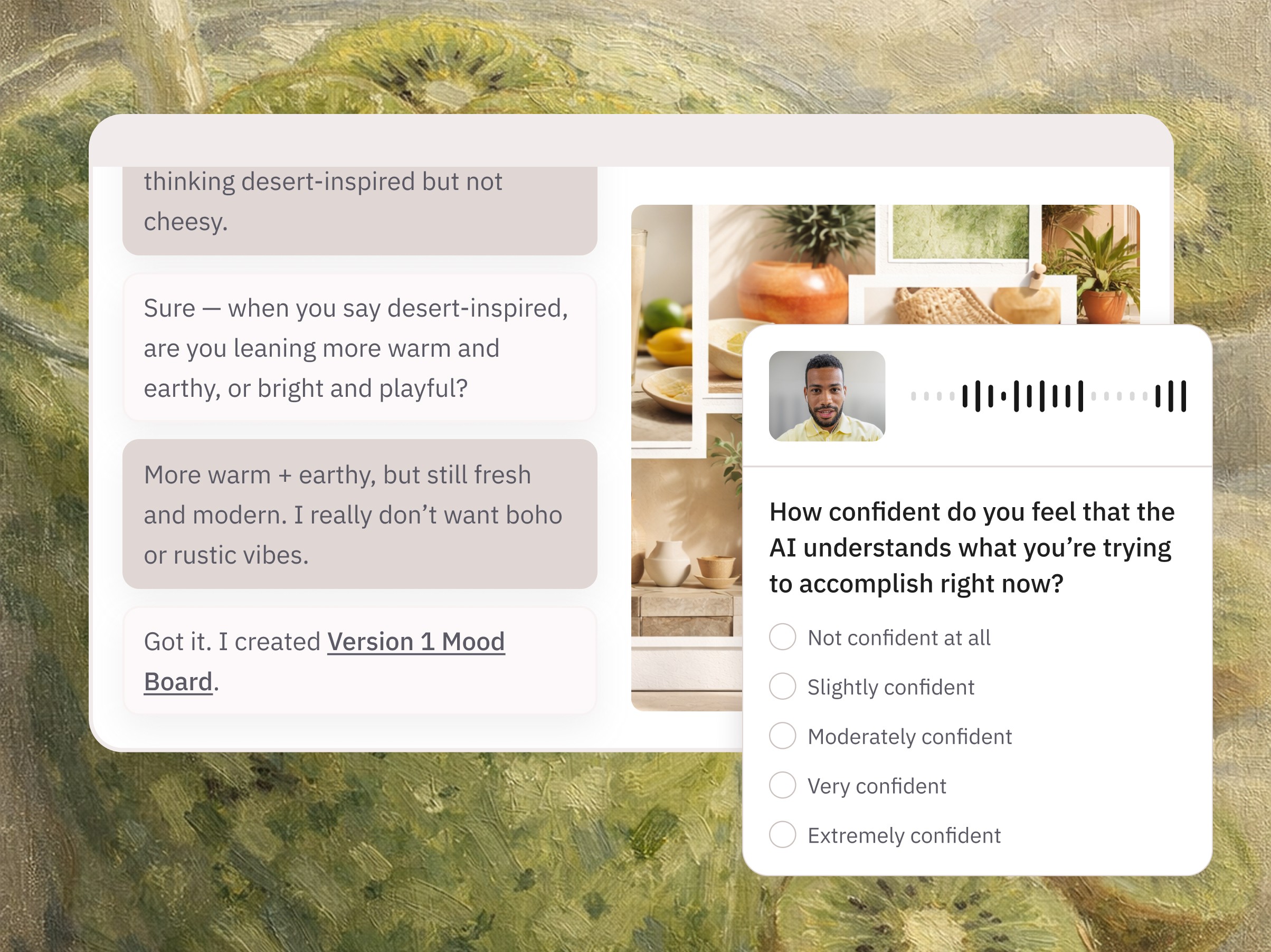

AI Experience Evaluation

Understand whether interactions with AI feel helpful and trustworthy to users through first-party user experience evaluation.

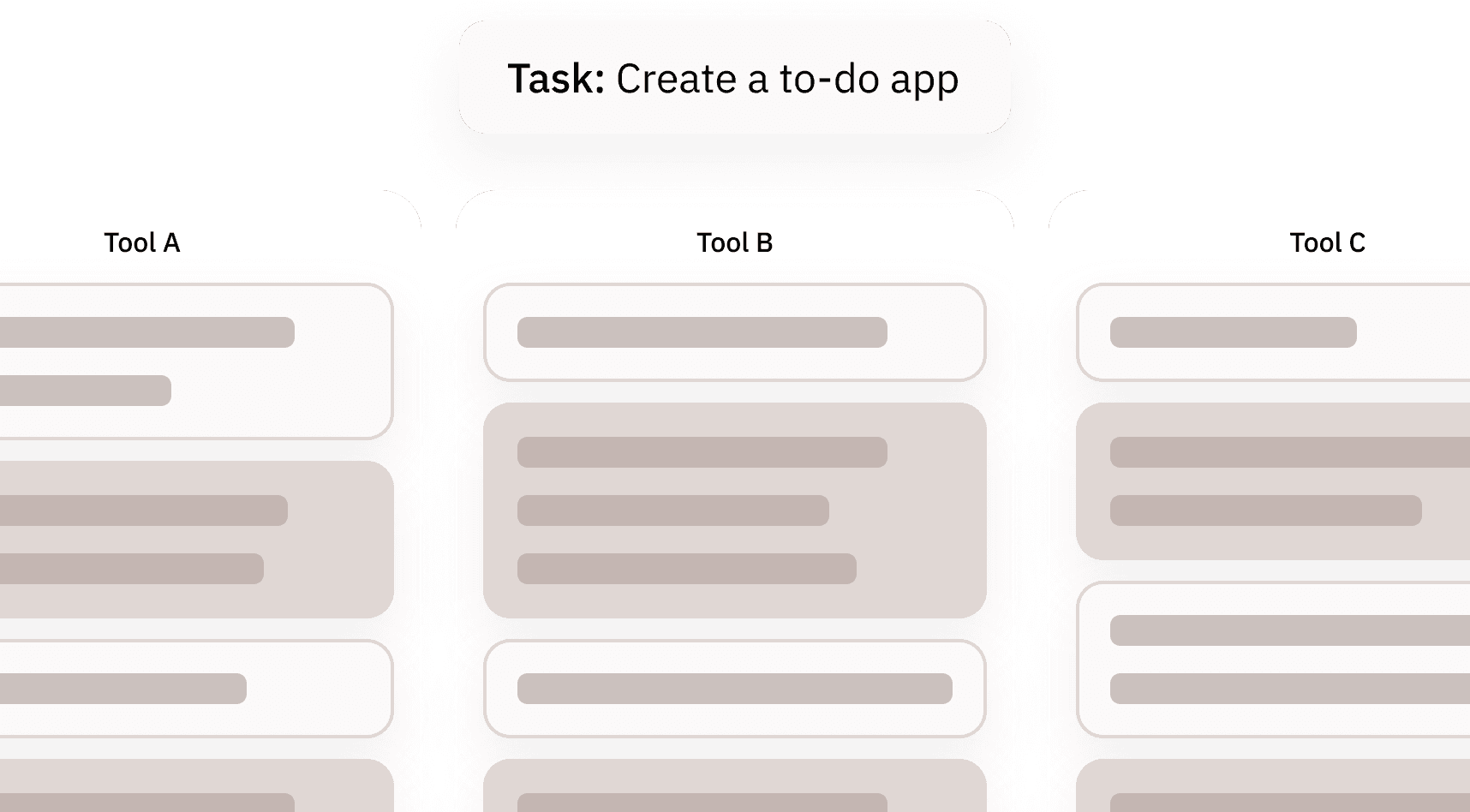

Model & System Comparisons

Compare AI systems and tools based on real user experience — not just benchmark scores.

Decision Support & Trust

Evaluate whether AI helps users make decisions, build confidence, or move forward.

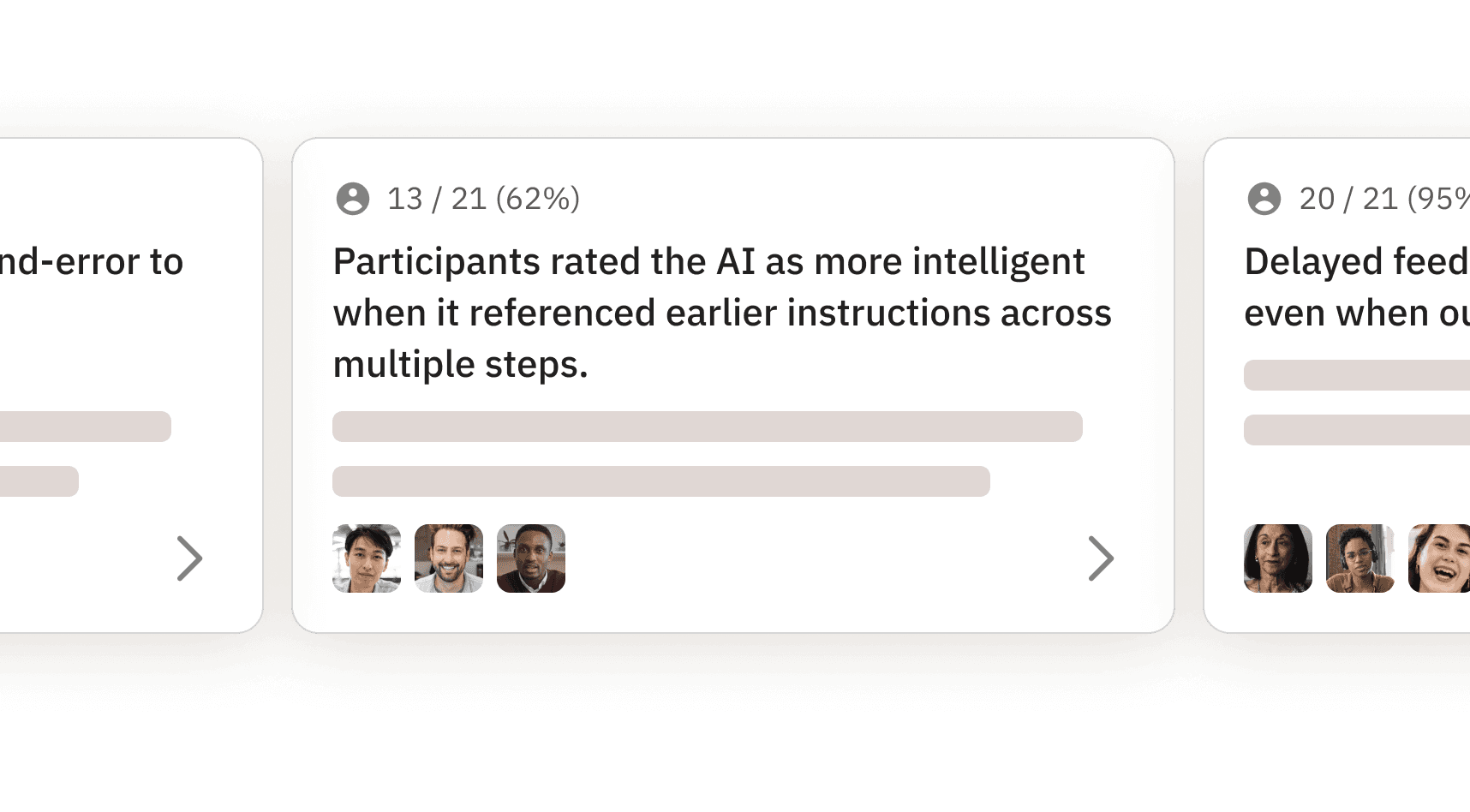

Uncover Consensus Through Scale

Every AI interaction is unique. UX Evals reveal shared patterns by scaling user experience evaluation across hundreds of real conversations.

The advantages of UX Evals

As products move from pixels to tokens, each user’s experience becomes unique to them.

Both AI evals and traditional usability testing struggle to derive insights from first-person, multi-modal, multi-turn interactions.

UX Evals ground UX evaluation in how AI is actually experienced by users, across real conversations and real contexts.

UX Evals are built for real-world AI use

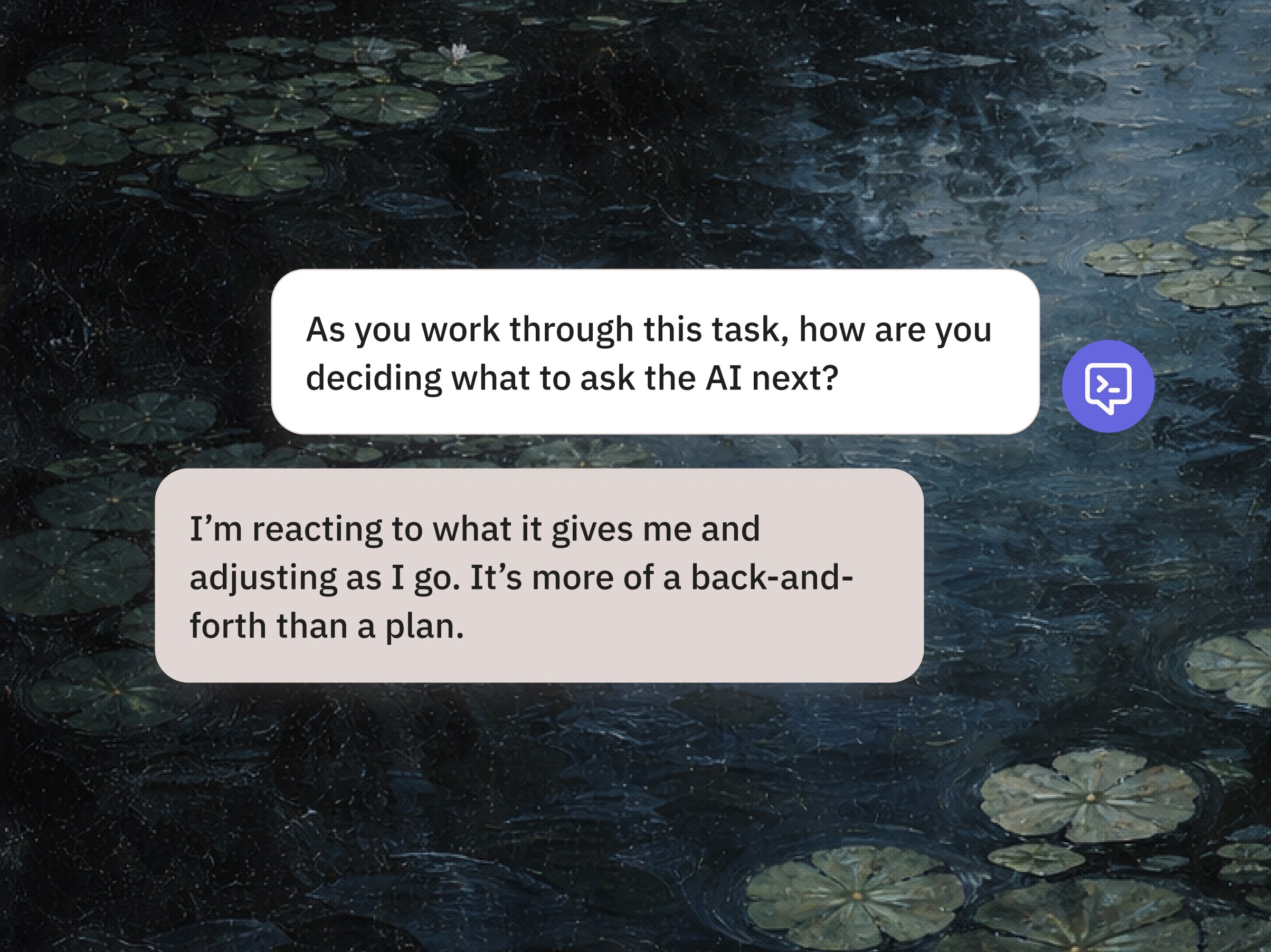

First-Person Conversations

Researchers bring their own goals, context, and questions — not pre-written prompts.

Multi-Turn Evaluation

Value is assessed across the entire conversation, not a single response.

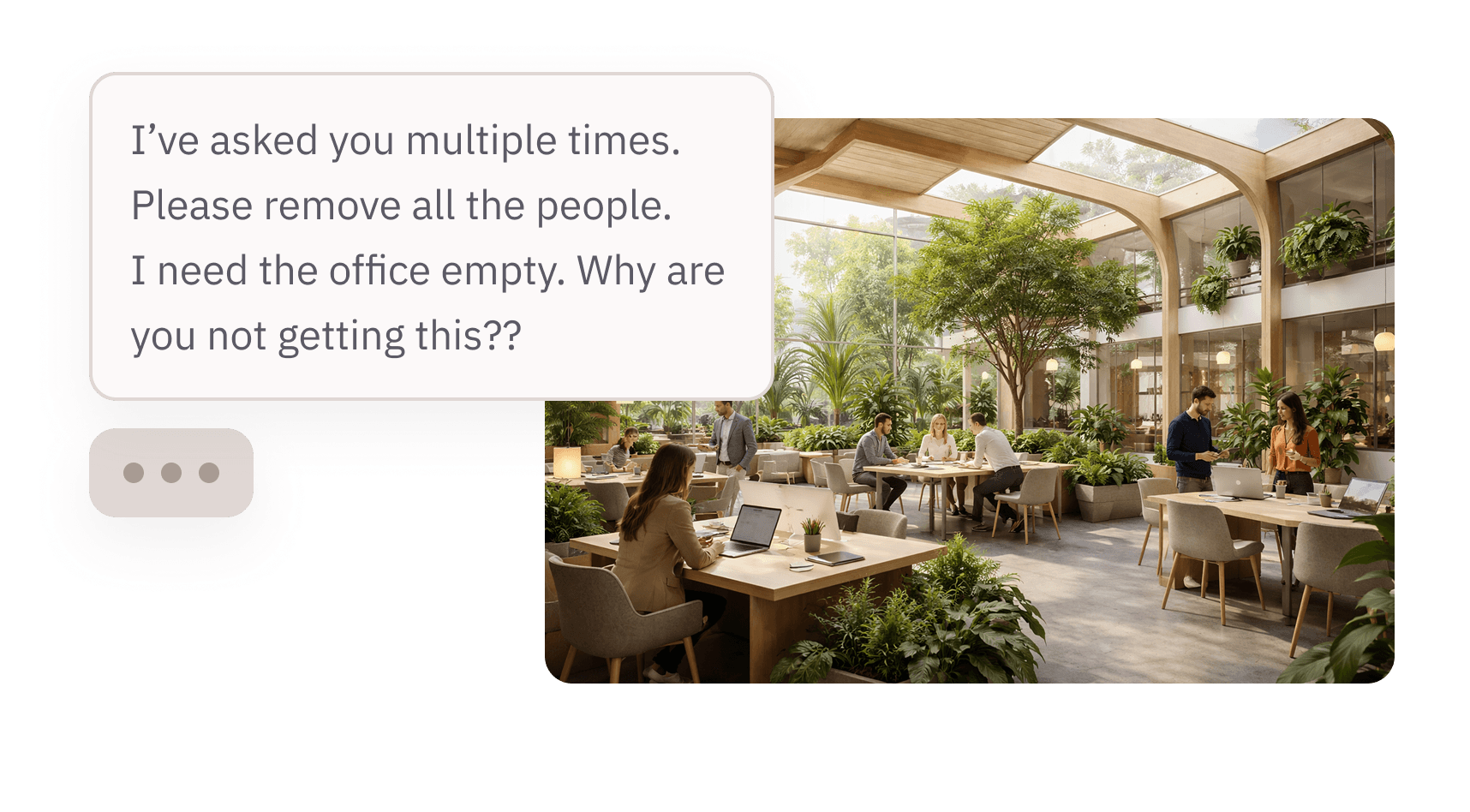

Real-World Conditions

Prompts are messy, imperfect, and emotional, just as real human communication is.

Scaled Qualitative Signal

Patterns emerge by observing experience across many users, not isolated anecdotes.

Why UX Evals go beyond traditional approaches

Beyond traditional AI evals

Machine evals and human graders test whether AI works against predefined criteria.

UX Evals test whether users themselves actually prefer and value the experience the AI delivers.

Beyond usability testing

Usability testing was built for static pixels and flows.

UX Evals are built for conversations — where outcomes are non-deterministic and value is subjective.

Resources for researchers running UX Evals

White paper

Introducing UX Evals

The “why” behind the net-new methodology, written by the Microsoft team that developed it.

Jan 22, 2026

Guide

Outset UX Evals: A How To Guide

A step-by-step resource for researchers looking to adopt UX Evals.

Jan 22, 2026

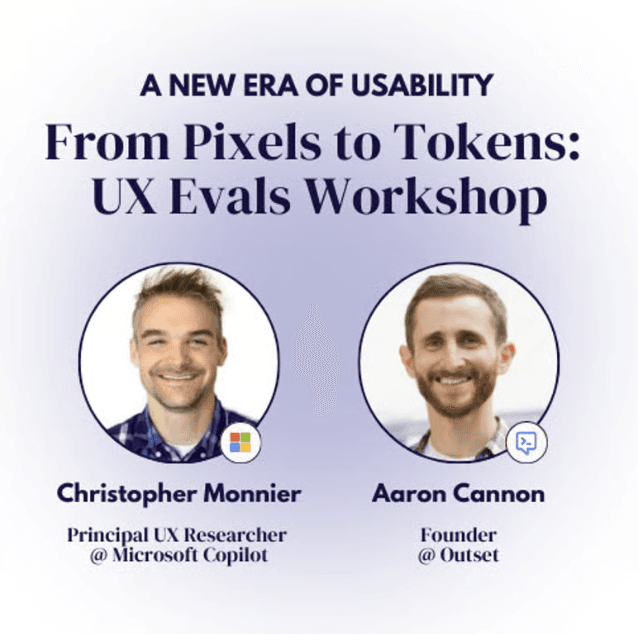

Event

From Pixels to Tokens: A UX Evals Workshop

A hands-on workshop on how to implement UX Evals, hosted by the Microsoft Copilot team that developed the methodology. Watch the recording on YouTube.

UX Evals Workshop (YouTube)

UX Evals FAQs

Answers to common questions about UX Evals and how they help teams evaluate AI.

What are UX Evals?

UX Evals are Outset’s modern approach to user experience evaluation, designed for AI systems. Instead of testing static interfaces or isolated outputs, UX Evals measure how users actually experience, trust, and collaborate with AI across real conversations.

How are UX Evals different from traditional UX evaluation?

Traditional UX evaluation focuses on predictable flows and interfaces. UX Evals are designed to evaluate AI, accounting for the multi-turn conversations, non-deterministic outputs, and subjective user value that emerge during real AI interactions.

Why is user experience evaluation important for AI products?

User experience evaluation helps teams understand whether AI feels helpful and trustworthy to users. UX Evals go beyond accuracy to evaluate AI based on real human experience, not just technical performance.

What makes an effective AI evaluation platform?

An effective AI evaluation platform supports first-person conversations, multi-turn UX evaluation, and qualitative insights at scale. Outset’s UX Evals are a set of AI evaluation tools that capture real user context, emotion, and decision-making, not just benchmark scores.

How do UX Evals help teams evaluate AI at scale?

UX Evals allow teams to evaluate AI by studying patterns across hundreds of real user interactions. Because Outset’s UX Evals tools are purpose-built for conversational systems, teams can uncover shared experience signals without relying on synthetic prompts.